Backpropagation Program

Video: Backpropagation primer. Practice Quiz: Multilayer perceptron. Notebook: Tensorflow_task.ipynb.

Algorithm for training a multilayer neural network by the error backpropagation method (Backpropagation). From the sandbox. Algorithms, Programming.

Neural network class. An object of that type represents a neural network of several types:
- Single layer perceptron;
- Multiple layers perceptron.

There are several training algorithms available as well:
- Perceptron;
- Backpropagation.

Published: Apr 3, 2017. Let's discuss the math behind back-propagation. We'll go over the 3 terms from calculus you need to understand it (derivatives, partial derivatives, and the chain rule) and implement it programmatically. Code for this video: github.com/llSourcell/howtodomathfordeeplearning. Please subscribe! That's what keeps me going. I've used this code in a previous video.
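As a small illustration of the three ideas named above (derivative, partial derivative, chain rule), here is a minimal sketch for a single sigmoid neuron. It is not the code from the video; the squared-error loss, the function names and the numeric values are assumptions chosen for illustration. The analytic gradient is compared against a finite-difference estimate.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def loss(w, b, x, y):
    """Squared error of one sigmoid neuron: L = 0.5 * (a - y)^2, a = sigmoid(w*x + b)."""
    a = sigmoid(w * x + b)
    return 0.5 * (a - y) ** 2

def grad_w(w, b, x, y):
    """Partial derivative dL/dw via the chain rule: dL/da * da/dz * dz/dw."""
    a = sigmoid(w * x + b)
    dL_da = a - y            # derivative of 0.5 * (a - y)^2 with respect to a
    da_dz = a * (1.0 - a)    # derivative of the sigmoid with respect to z
    dz_dw = x                # partial derivative of w*x + b with respect to w
    return dL_da * da_dz * dz_dw

# Illustrative values only (assumptions, not taken from the video).
w, b, x, y = 0.5, -0.2, 1.5, 1.0
analytic = grad_w(w, b, x, y)

# Central finite difference as an independent check of the chain-rule result.
eps = 1e-6
numeric = (loss(w + eps, b, x, y) - loss(w - eps, b, x, y)) / (2 * eps)
print(analytic, numeric)
```

The two printed numbers should agree to several decimal places; a mismatch usually points to a missing factor in the chain.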

I had to keep the code as simple as possible in order to add these mathematical explanations and keep it at around 5 minutes.

3 months ago: URGENT HELP NEEDED!
function [pred,t1,t2,t3,a1,a2,a3,b1,b2,b3] = grDnn(X,y,fX,f2,f3,K)
% neural network with 2 hidden layers
% t1,t2,t3 are thetas for every layer and b1,b2,b3 are biases
n = size(X,1);
Delta1 = zeros(fX,f2); Db1 = zeros(1,f2);
Delta2 = zeros(f2,f3); Db2 = zeros(1,f3);
Delta3 = zeros(f3,K); Db3 = zeros(1,K);
t1 = rand(fX,f2)*(2*.01) - .01;
t2 = rand(f2,f3)*(2*.01) - .01;
t3 = rand(f3,K)*(2*.01) - .01;
pred = zeros(n,K);
b1 = ones(1,f2); b2 = ones(1,f3); b3 = ones(1,K);
% Forward Propagation
wb = waitbar(0,'Iterating.'

6 months ago: So because I've never been so hot on calculus, just to clarify: the delta of a particular neuron (from which we get the adjustment for the weight of each input from the previous layer by multiplying this delta by each input value, right?) is found by multiplying the sum of the weighted deltas of the next layer by the gradient of this layer (which in the case of the sigmoid function would be x*(x-1), where x is the output of the current neuron).

Is this right? I'm building a simple neural network in Max/MSP and trying to wrap my head around how multiple layers work.

8 months ago: Hello, I'm supposed to be learning this at my university, but we only got the theory (the math), so now that I need to program it I'm having a lot of trouble. Thankfully I came across this video, which helped me a lot in getting down to code.
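On the earlier question about a neuron's delta: the description is essentially the standard rule for a hidden neuron, with one detail worth checking. Expressed through the neuron's output x, the sigmoid derivative is x*(1-x), not x*(x-1); the two differ only in sign, but that sign decides the direction of the update. The sketch below is a minimal illustration under that assumption. The function names and the numbers are hypothetical, and whether the resulting gradient is added or subtracted depends on how the output delta is defined.

```python
def sigmoid_prime_from_output(out):
    """Sigmoid derivative written in terms of the neuron's output: out * (1 - out)."""
    return out * (1.0 - out)

def hidden_delta(out, next_deltas, weights_to_next):
    """Delta of one hidden neuron.

    out             -- this neuron's sigmoid output
    next_deltas     -- deltas of the neurons in the next layer
    weights_to_next -- weights from this neuron to each of those neurons
    """
    # Sum of the next layer's deltas, each weighted by the connecting weight.
    back_signal = sum(d * w for d, w in zip(next_deltas, weights_to_next))
    return back_signal * sigmoid_prime_from_output(out)

def weight_gradients(delta, inputs):
    """Gradient for each incoming weight: the neuron's delta times that input."""
    return [delta * x for x in inputs]

# Illustrative numbers only (assumptions).
delta = hidden_delta(out=0.5, next_deltas=[0.1, -0.2], weights_to_next=[0.4, 0.3])
grads = weight_gradients(delta, inputs=[1.0, 0.0, 1.0])
print(delta, grads)
```

An input of zero yields a zero gradient for its weight, which is why that weight does not move for that sample.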

Still need some help understanding a couple of things about this video:
1. As seen in the video, the input is a 2D array, and I have trouble visualizing this. So far I have understood the case where each input has a single value (not an array): the inputs go into the neurons as a sum of each Xi times the weight that goes from Xi to Hj. For example, say X1 = X2 = 1, w11 = 0.3 and w21 = 0.4; then H1 = 1*0.3 + 1*0.4. But in this example each input is an array, X1 = [0, 0, 1]; X2 = [1, 1, 1], and I have trouble seeing this step.
2. The dot product: I kind of see it this way. We are calculating not the nlayer neurons but the outputs directly, which would make sense, putting it as y = SUM(hi*Wij), so this way we see directly the output we get from our net. Is this right?

A year ago: There is a bit of glossing over detail on a part of the subject where I see a number of confused people posting on Stack Overflow or Data Science Stack Exchange. Namely, that you don't backpropagate the *error* value per se, but the gradient of the error with respect to a current parameter. This is made more confusing to many software devs implementing backpropagation because the usual design of neural nets is to cleverly combine the loss function and the output layer transform, so that the derivative is numerically equal to the error (specifically, only at the pre-transform stage of the output layer). It really matters to understand the difference, though, because in the general case it is not true, and there are developers 'cargo culting' in apparently magic manipulations of the error because they don't understand this small difference.
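The distinction drawn in the comment above can be checked numerically. The following is a minimal sketch, assuming a single sigmoid output unit and two common losses; the names and values are illustrative. With cross-entropy, the gradient at the pre-transform stage equals the error a - y; with squared error it picks up the extra sigmoid-derivative factor and no longer equals the error.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def cross_entropy(z, y):
    """Binary cross-entropy as a function of the pre-activation z."""
    a = sigmoid(z)
    return -(y * math.log(a) + (1 - y) * math.log(1 - a))

def squared_error(z, y):
    """Squared error as a function of the pre-activation z."""
    return 0.5 * (sigmoid(z) - y) ** 2

def numeric_grad(f, z, y, eps=1e-6):
    """Central finite-difference estimate of df/dz."""
    return (f(z + eps, y) - f(z - eps, y)) / (2 * eps)

# Illustrative values only (assumptions).
z, y = 0.3, 1.0
a = sigmoid(z)
error = a - y

grad_ce = numeric_grad(cross_entropy, z, y)   # matches the error
grad_se = numeric_grad(squared_error, z, y)   # matches error * a * (1 - a)
print(error, grad_ce, grad_se)
```

Code that copies "backpropagate the error" from a cross-entropy setup into a squared-error one silently drops the a * (1 - a) factor, which is the kind of mistake the comment warns about.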

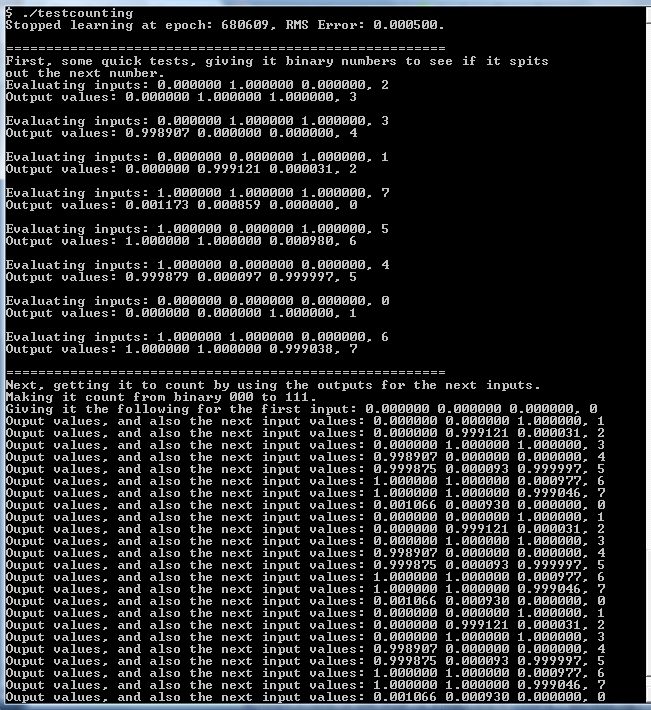

Description: Backpropagation. A simple example of the backpropagation algorithm. Teach a small neural network basic functions using predefined examples such as AND, XOR or 2D distance. Custom tasks can be imported from and exported to XML or easily readable CSV. Integration with a web browser. You can also create new tasks or edit loaded ones in an editor. You can show the network anatomy with all weights, as well as the result with the training set marked or the exact output for a given input.

If learning fails, restart the whole learning algorithm or re-initialize the weights of just a single neuron. The learning rate alpha is automatic and configurable. Help is available on each screen. This program may be useful for students of a basic course on artificial neural networks. It illustrates well that training neural networks is a complex task and that basic backpropagation without any improvements can only solve very simple tasks.
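To make the XOR task mentioned above concrete, here is a minimal sketch of basic backpropagation with a fixed learning rate. It is not the program's own code: the 2-4-1 sigmoid architecture, the squared-error loss, the value of alpha, the seed and all names are assumptions for illustration.

```python
import math
import random

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def forward(x, W1, b1, W2, b2):
    """Return the hidden activations and the single output for one sample."""
    h = [sigmoid(sum(w * xi for w, xi in zip(row, x)) + b)
         for row, b in zip(W1, b1)]
    out = sigmoid(sum(w * hj for w, hj in zip(W2, h)) + b2)
    return h, out

def mean_loss(data, params):
    """Mean of 0.5 * (output - target)^2 over the data set."""
    return sum(0.5 * (forward(x, *params)[1] - y) ** 2 for x, y in data) / len(data)

def train(data, hidden=4, alpha=0.5, epochs=5000, seed=0):
    """Plain per-sample backpropagation; returns the loss before and after training."""
    rng = random.Random(seed)
    n_in = len(data[0][0])
    W1 = [[rng.uniform(-1, 1) for _ in range(n_in)] for _ in range(hidden)]
    b1 = [0.0] * hidden
    W2 = [rng.uniform(-1, 1) for _ in range(hidden)]
    b2 = 0.0
    start = mean_loss(data, (W1, b1, W2, b2))
    for _ in range(epochs):
        for x, y in data:
            h, out = forward(x, W1, b1, W2, b2)
            # Output delta: dL/d(out) times the sigmoid derivative.
            d_out = (out - y) * out * (1 - out)
            # Hidden deltas, computed before any weight is changed.
            d_hid = [d_out * W2[j] * h[j] * (1 - h[j]) for j in range(hidden)]
            for j in range(hidden):
                W2[j] -= alpha * d_out * h[j]
                b1[j] -= alpha * d_hid[j]
                for i in range(n_in):
                    W1[j][i] -= alpha * d_hid[j] * x[i]
            b2 -= alpha * d_out
    return start, mean_loss(data, (W1, b1, W2, b2))

XOR = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 0)]
start_loss, end_loss = train(XOR)
print(start_loss, end_loss)
```

How far the loss drops depends on the random initial weights. Trying a different `seed` plays the same role as the "restart" option described above when a run stalls.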